Which Crisis in Education? [1]

Gene V Glass

I want to argue today that there is a crisis in our education system. But “poor achievement” and “dropouts” is not its name. The crisis is not that our children are ignorant of trigonometry or can’t parse dependent clauses. Far more critical is that our children don’t know that McDonald’s, and Philip Morris, and Anheuser-Busch are killing them, not intentionally, but incidentally as a side effect of hooking them on sugar and fats and nicotine and alcohol. But the real crisis is not even about what young people know or don’t know.

We were once not long ago told that the “crisis” was that Japan was eating our economic lunch. Now we are told that young men and women on the Asian subcontinent taught to speak like Nebraskans are taking all of our telemarketing jobs from us, that “outsourcing” is the new crisis that threatens America’s young people. I submit that this is all baloney. The crisis in education has nothing to do with achievement, test scores, dropouts or any of the other stuff we are being told. Think back to the Presidential debates, still fresh in our memories, where the answer to every economic woe was to test the heck out of second graders. This is a pernicious and ridiculous type of thinking about education that is ruining the lives of children, corrupting the curriculum, and making the profession unbearably demeaning to most teachers.

America’s schools have never in their history housed such bright, intelligent and high achieving students and teachers. National Assessment of Educational Progress results have never flagged in the 40-year history of that uniquely believable index of student performance. And yet we have been told that our students are nearly the dumbest in the world when it comes to science and math. This is pure poppycock. Of all the nations tested in the international assessments of math and science, the U.S. students were on average at least a year younger than those of most nations at the time of testing (the “last year” of secondary school comes at different times in different nations), they were the only students not taught in the metric system (the system used in the tests), and theirs was one of four nations that chose not to allow the use of calculators. And yet, when you compare Finland to Connecticut (at least a plausible comparison), the U.S. performs at the top of the world. Let us stop talking about a crisis of academic failure, except that which is self-induced by irrelevant tests with idiotic standards. [3]

To understand the real crisis in American education, we have to go back 100 years, to 1905 and a German chemist by the name of Haber, whom no one now knows. Fritz Haber invented the process of taking nitrogen from the air and combining it with hydrogen to produce ammonia, which immediately became the cheap, ready source of artificial fertilizers. This mundane discovery remade the world in the next five decades. Now one farmer using cheap fertilizers could produce what ten farmers used to produce. With fewer farmers needed, the great rural-to-urban migration began in Europe and the U.S. Cities burgeoned; tall buildings grew, and suburbs sprawled.

But the most important cultural change in all this was that in the first fifty years of the 20th century we shifted from being a “pro-natal” culture to an “anti-natal” culture. Whereas in the 1800s the birth of a child was seen as an economic asset (two more hands to help on the farm), by 1920 the majority of families considered the birth of a child to be an economic liability (another mouth to feed, teeth to straighten, and school clothes to buy until age 18). When the “pill” was invented in 1950, we truly gained the wherewithal to limit family size.

Societies have used many means of limiting population growth throughout history. When the population strained the food supply, infanticide and homosexuality were practiced to reduce the surplus population. We are too enlightened to resort to the former, and our contemporary celebration of the latter is less a badge of our moral maturity, as some would have it, than it is a reflection of the deeply anti-natal value system of the rich industrialized nations.

In 1983, we were told that because of the failure of our schools, we were a Nation at Risk, and the rhetoric of “crisis” took hold of our conversation. Not coincidentally, in the 1980s demographers announced to the middle class descendants of white northern Europeans in America that their birth rate had finally dropped below replacement levels; population growth in America and the Biblical injunction to be fruitful and multiply were being upheld by immigrants and poor ethnic minorities.

It is at this point that the series of phenomena that some now label the “crisis” in education truly kicked in. An aging, wealthy, property-owning white middle class in America no longer wishes to support public education. The policy inventions of the past 25 years are all of a stripe: privatize education, and make those who send their children to school pay for it themselves through vouchers, charter schools, and tuition tax credits. We have No Child Left Behind, and for those in danger of falling behind, there’s money to purchase tutoring from private businesses, owned by white middle class stockholders.

And where we, the dominant class, cannot cut our expenditures for education, we want to separate our children from the threats we imagine are posed by the children of the underclass. White-flight charter schools are celebrated for their “high standards.” And make no mistake, there are two charter school systems in this state: one for the rich and one for the poor. Tuition tax credits are used to hold down private school tuition, until the glorious day when vouchers take over the entire cost.

There is a crisis today in American public education, and it is 100 years in the making. The powerful are no longer willing to pay for the education of “other people’s children.” And undeniably, those other people are recognizable by the color of their skin, the shape of their eyes, or the texture of their hair. The crisis in our schools arises from the loss of the sense that we are all in this together. It is expressed in the growing sense that America is made up of “us” (the well-off) and “them” (the car-stealin’, drug dealin’, non-English speakin’ others). We have lost the belief that ultimately we will be judged by how we have taken care of the least among us.

Notes

1. Remarks delivered at the Fall Forum of the American Association of University Women (AAUW) and the Women’s Studies Department, Arizona State University – West, Crisis in Education: A Call to Action, on November 6, 2004, at Arizona State University-West.

2. Berliner, D. C. & Biddle, B. J. (1995). The Manufactured Crisis: Myths, Fraud, and the Attack on America's Public Schools. Reading, MA: Addison-Wesley.

Tuesday, March 17, 2026

Which Crisis in Education?

Sunday, January 11, 2026

Leonard Waks Shares His Thoughts on the Sorry State of US Higher Education

A recent exchange with my friend Len Waks concerning academic publications prompted his observations on the nation's higher education system. ~GVG


A recent article in The Conversation argues that academic publishing is facing a “crisis” of enshittification. The authors point to several interrelated problems: the sheer volume of publications makes it difficult to identify authoritative work; fraudulent journals circulate hoax papers and pirated content; and profit-driven publishing models distort scholarly incentives. The result is a system overwhelmed by quantity, stripped of its original purpose, and increasingly disconnected from the advancement of knowledge.

This crisis in publishing, however, is only one manifestation of a much deeper and longer-standing problem, one that begins not with journals but with mass higher education itself. Depending on how the term is defined, I am generally opposed to mass university education, precisely because it degrades the entire system.

The pattern is familiar from secondary education. Consider the “algebra for all” movement. Researchers noticed that students who completed ninth-grade algebra tended to do better later on than those who did not. From this correlation, policymakers drew an invalid inference: that every student should be required to take ninth-grade algebra. The result was predictable. Algebra classrooms were filled with students who could not reliably perform four-function arithmetic. To accommodate them, teachers devoted large portions of class time to remedial arithmetic. The students who needed remediation still did not learn algebra and, crucially, neither did the students who were already prepared, whose time was consumed by material they had already mastered.

The same logic now governs the arts and sciences curriculum at the university level, with even more serious moral consequences. A liberal arts curriculum is traditionally shaped for students above roughly the first standard deviation: those capable of sustained attention, close reading, interpretive nuance, and reflective discussion. Expand access from roughly 16 percent of the population to 40 percent, and many of the new entrants are simply not instructionally ready. They cannot read closely; they cannot engage in the kind of textual analysis that lies at the heart of liberal education. Faculty, understandably, try to respond humanely. They alter the curriculum so that these students can get something out of it: reading snippets instead of full works, replacing textual analysis with informal discussion. Some students benefit in limited ways. But what they receive is not a liberal education. They do not memorize or emulate exemplary passages; they do not learn to write in response to demanding texts; they do not examine literary characters as moral examples, because they lack the interpretive capacities required to do so.

Meanwhile, class sizes increase to accommodate expanding enrollments, and the “talented eighth” receive far less attention to close reading, individual interpretation, and moral discussion. As with algebra for all, college for all produces a dual loss: the underprepared students do not receive the education they were promised, and the best-prepared students no longer receive a genuine liberal education, except at a small number of highly selective liberal arts colleges such as Swarthmore or Haverford.

Scale limits are only one part of the problem. These pressures reshape the professoriate itself. Faculty are trained at elite research universities where college teaching, especially lower-division teaching, receives little or no attention. More training would not necessarily help, any more than additional pedagogical training alone could fix algebra-for-all classrooms. Faculty enter their careers with unrealistic expectations, then respond in one of two ways: they either come to despise their students and seek escape from lower-division teaching, or they feel compassion and attempt to redesign courses to meet students where they are. Either way, something essential is lost.

At the same time, faculty face publish-or-perish pressures that could, in principle, be relaxed for those whose primary role is undergraduate teaching, especially in the first two years. Instead, they produce marginal work simply to survive professionally. That work must be placed somewhere, and so journals with low standards proliferate. Some are legitimate outlets for emerging or niche fields made possible by the sheer number of scholars; Environmental Ethics is one example. But many function largely as repositories.

Once publication counts lose credibility as indicators of scholarly merit, institutions turn to citation analysis. In many ways, this worsens the problem. Citations correlate poorly with genuine intellectual value; fashionable or trendy work crowds out more substantial contributions. Yet one now sees scholars of apparent seriousness boasting about their citation counts. This is, frankly, bonkers.

Finally, evaluation shifts from scholarship to enrollments. Departments are judged by student numbers. History, philosophy, and literature programs are closed as humanities majors decline from roughly 12 percent of students to about 3 percent over three decades. Meanwhile, computer science departments undergo massive hiring sprees, just as AI threatens to eliminate many entry-level coding jobs altogether.

The result is a system degraded at every level: curriculum, teaching, scholarship, evaluation, and institutional planning. The enshittification of academic publishing is real, but it is only one symptom of a much larger structural failure.

Len


Sunday, November 9, 2025

A Note to a Friend About Retirement


In about 2017, I wrote this letter to a friend who, at 70, was contemplating retiring from his academic position. My advice wandered.

Hi, friend.

I should apologize for burdening you with my moodiness. I think I mentioned it in an attempt to excuse my snippy comment about the Joubert quote. But moody I am these days. It has nothing to do with two cancers (prostate & lymphoma) or a heart attack (three stents and a pacemaker); stuff like that is a piece of cake. It’s about having retired too early. I probably had a couple more good years left in me.

Michael Crow took over ASU ten years ago. One of his first moves was to come over to the ed school and “dis-establish” us. He told all the faculty, “make an appointment with the Provost to discuss your place in the university.” Some of our top faculty did so, and left the college for other colleges on campus. Some left for other universities. The less mobile ones stayed put. I looked around for where I might fit and decided to investigate the public policy college. The first thing I saw was that Crow’s academic appointment was there. Not a week earlier I had published an op-ed labeling as “stupid” a proposal to substitute an exam for the last year of high school in Arizona. The proposal, it turned out, was the work of Crow’s wife. I was 70, I was commuting for August – October from Colorado to teach, and I decided, “screw it.” Went into the Provost and said, “I’m done.”

Several of my colleagues who stayed in the college – which survived Crow’s threats, incidentally – are still holding onto their lines. They are approaching their 80s; their best years are behind them; they occupy a line that should be filled by new young PhDs. My feelings about taking up space and sucking a large salary out of the institution also figured in my decision to hang it up when I did.

I did not, however, anticipate the feelings of worthlessness that come with stepping aside. It’s not as though I had not been warned. Lee Cronbach said, “we sink without a ripple.” Berliner told me that when he left Far West Lab decades ago – which he virtually built himself – a letter that was sent to him two months later at the lab was returned “Addressee Unknown.” David, who is as insecure as am I, fights off his feelings of worthlessness by filling his days with ever more professional activity – much of which involves traveling, which I abhor.

We should have seen this coming. We made our way in an institution that valued originality – the discovery of new knowledge. Individuals navigate the system by ignoring what came before and claiming originality. I often read articles and think, “Jeeez, Lee said all this 50 years ago,” or “Scriven said this, and he said it better.” I know this is how things work, and I accept it. But that does nothing to lessen the sense of superfluity.

I published a chapter in a book this year. It appeared almost to the day 57 years after my first academic paper. I had always wanted to make 60 years. I recall Julian Stanley’s – he was my major prof at Madison – amazement in 1964 when Cyril Burt published a paper 60 years after his first publication. Julian admired that. Somehow I internalized that as a personal marker of success. It probably won’t happen. (P.S. I didn’t admire Burt’s ethics, but I had some sympathy for Clarence Karier’s argument that Burt was not a scientist; he was a rhetorician, in the manner of Isocrates.)

I find that I am no longer drawn to the field. I guess I’m a modernist adrift in a post-modern world. Bob Stake once wrote that I serve the modernist’s appetite. He was right, as he often is. (I started working with Bob when I was 19 years old. We were both modernists then, but he grew.) I was raised, academically, in the age of promises. I was told in grad school that psychology and psychometrics had won the war (WW II). All we needed was some grant money and a couple years to build more valid and reliable tests and match them with the right interventions and we would have the cure for poverty and ignorance and illness. No one believes those promises any more, nor should they.

The whole business of public education has turned out to be political battles for control. Everything we thought was important has been trumped by Koch, or DeVos, or Eli Broad. The charter school industry is raping the public schools. Re-segregation lies behind every new “reform.” Education doesn’t need more research, it needs more political enlightenment. My mood has been tainted by how ugly national politics are at the moment.

I teach a small seminar in an EdD program at San Jose State in the summer. I’ve been splitting the course with David for the past 6 years. The conversation is basically about the politics of education. A younger faculty member could probably do a better job. I’m losing my motivation to update my notes.

I suspect that there is not really any warning for you here. I hung on long enough to sense my own obsolescence. My career spanned two radically different eras in the history of the academy. (Stanley Fish recently did a good job of explaining what has happened to the academy.) I suspect that your situation is different. I recall Julian’s glee at coming out of a faculty meeting where he and his like-minded colleagues had changed the PhD core to exempt us mathematicians from courses taught by Merle Borrowman and Don Arnstein. He thought he had done us a huge favor.

And so, I am retired. I spend my days working on my tennis game – nationally ranked players in their 70s play at my court every Monday; I seldom win. I help my granddaughter with her essays for English Comp. I visit on the phone with my daughter every weekday as she drives to work. I attempt to reach Genius on the New York Times anagram puzzle each morning; it’s called Spelling Bee, ironically a misnomer. I plant flowers, and empty the dishwasher. We own two very nice houses. By most standards, we are “well off,” though not so well off as Ernie House who spends three hours a day working on his investments and has done remarkably well. (He was diagnosed last year with Lewy Body Dementia; 5-year prognosis. We talk about it matter-of-factly. Update in 2025: he was misdiagnosed and is doing fine.) But in spite of our good fortune, financial anxiety is a regular part of retirement. A five-year recession would be tough. Eating one’s seed corn is a choice not faced by the still employed.

You, perhaps, honor the pedagogical function of the university more than some others. And you honor the history of your field and avoid the pretense of originality – unless I have fallen victim to some prejudice. That’s what the university should be more about. Most research is a waste of time, whether to do it or to read it – especially in the social sciences and minor disciplines. (If I had it to do over, I’d go into medicine.) At 70, you have several good years ahead of you, and your perspective on faculty politics is one that is badly needed.

Wednesday, May 8, 2024

Class Notes: Relationship of Education Policy to Education Research and Social Science

2005

Education Policy to Education Research and Social Science

These notes have two purposes: to disabuse you of naïve conceptions of the role of research in policy development (if you happen to have any, and perhaps you don’t), and to illustrate and conceptualize the actual relationship of social science and educational research to policy.

Since we are working in a graduate program that has training for a career of scholarship and research as its goal, and since it is education policy that is the subject of this scholarly attention, it should not be unexpected that at some point we would ask how these two things come together. There is much more to policy making than doing or finding research that tells one how to make policy—far more. In fact, basing policy on research results—or appearing to do so—is a relatively recent phenomenon. Policies are formulated and adopted for many more reasons than what educational and social science research give. Policies may arise from the mere continuation of traditional ways of doing things; they may come about to satisfy a group of constituents to whom one owes one’s elected or appointed position. They may grow out of the mind of an authority in a way that is completely incomprehensible, even to the leader. But increasingly, policies are being viewed by opinion makers, journalists, intellectuals of various sorts, and even a broader public as not legitimate or not deserving of respect or lacking authority unless they are linked somehow to science. In what follows immediately, I have written down a few ideas on this subject. They may orient your thinking in a way that makes the articles you will read more meaningful.

In brief, I believe that research is far too limited in its scope of application to dictate policy formulation. Policy options arise in large part from the self interests of particular groups. No matter how broad and solid the research base, opponents of a policy recommendation that seems to arise from a particular body of research evidence will find ground on which to stand where the evidence is shaky or non-existent, and from there they will oppose the recommendation. If “whole language” instruction appears from all available research to result in greater interest in reading and more elective reading in adulthood, opponents will argue that children so taught will be disadvantaged in learning to read a second language or that no solid measures of comprehension were taken thirty years later in adulthood.

So what is the relationship of research to policy? The answer entails distinctions between such things as codified policy (laws, rules & regulations, by-laws and the like) and policy-in-practice (regularities in the behavior of people and organizations that rise to a particular level of importance, usually because they entail some conflict of interests or values, where we care to talk about them as “policy”).

Policy-in-practice is often shaped by research in ways that are characterized by the following:

- Long lag times between the “scientific discovery” and its effect on practice;

- Transmission of “scientific truths” through popular media, folk knowledge, and personal contact;

- Largely unconscious or unacknowledged acceptance of such truths in the forms of common sense and widely shared metaphors.

With respect to codified policy, research serves functions of legitimating choices, which it can do on account of its respected place in modern affairs. Research is used rhetorically in policy debates. Not to advance research in support of one’s position is often tantamount to conceding the debate—as if the prosecution put its expert on the stand and the defense had no expert. In rare instances so recondite and far from the public’s ability to understand and in instances where a small group of individuals exercises strict control (“contexts of command” as Cronbach and his associates spoke of them) or in places where the stakes are so low that almost no one cares about them, research may actually determine policy. But even these contexts are surprisingly and increasingly rare. (Research evidence is marshaled against such seemingly “scientific” practices as vaccinating against flu viruses, preventing forest fires, the use of antibiotics, tonsillectomies, and cutting fat out of your diet.)

For the most part, research in education functions in a “context of accommodation” where interests conflict and the stakes are relatively high. There, research virtually never determines policy. Instead, it is used in the adversarial political process to advance one’s cause. Ultimately, the policy will be determined through democratic procedures (direct or representative) because no other way of resolving the policy conflicts works as well. Votes are taken as a way of forcing action and tying off inquiry, which never ends. (As an observer once remarked concerning trials, they end because people get tired of talking; if they were all conducted in writing—as research is—they would never end.)

Research that is advanced in support of codified policy formation is at the other end of the scale from the kind of scientific discoveries earlier referred to that revolutionize prevailing views. Such studies resemble what Kuhn called “normal science”: small investigations that function entirely within the boundaries of well-established knowledge and serve to reinforce prevailing views. Such work is properly regarded less as “scientific” inquiry than as other things: rhetoric, testimony for the importance of a set of ideas or concerns, existence demonstrations (“This is possible.”). [Having written this paragraph in the first draft, now, in the second draft, I have no idea what I was driving at.]

Research does not determine policy in the areas in which we are interested—human services, let’s call them—in large part because such research is open ended, i.e., its concerns are virtually unlimited. Such research lacks a “paradigm” in the strict Kuhnian sense: not in the sense in which the word has come to mean virtually everything and nothing in popular pseudo-intellectual speech, but “paradigm” in the sense that Kuhn used it, meaning having an agreed upon set of concepts, problems, measures and methods. On one of the rare occasions when Kuhn was asked whether educational research had a paradigm, or any recent “paradigm shifts,” he seemed barely to understand the question. In writing (Structure of Scientific Revolutions), he opined that even psychology was in a “pre-paradigm” state. Without boundaries, a body of research supporting policy A can always be said to be missing elements X, Y and Z, that just happen at the moment to be of critical importance to those who dislike the implications of whatever research has been advanced in support of policy A.

This lack of a “paradigm” for social scientific and educational research not only makes them suffer from an inability to limit the number of considerations that can be claimed to bear on any one problem, it also means that there are no guidelines on what problems the research will address. The questions that research addresses do not come from (are not suggested by) theories or conceptual frameworks themselves, but rather reflect the interests of the persons who choose them. For example, administrators in one school district choose to study “the culture of absenteeism” among teachers; in so doing they ignore the possibility that sabbaticals for teachers might be a worthy and productive topic for research. The politics of this situation are almost too obvious to mention.

What are some functions of research in the process of policy formation?

a) Researchers give testimony in the legal sense in defending a particular position in adversarial proceedings, wherever these occur. (There even exists an online journal named Scientific Testimony, http://www.scientific.org/.) Both sides of a policy debate will parade their experts, giving conflicting testimony based on their research. But not to appear and testify is to give up the game to the opposition.

b) Researchers educate (or at least, influence) decision makers by giving them concepts and ways of thinking that are uncommon outside the circles of social scientists who invent and elaborate them. An example: in the early days of the Elementary and Secondary Education Act of 1965 (the first significant program of federal aid to education), the Congressional hearings for reauthorization of the law were organized around the appearance of various special interest groups: the NEA, the AFT, the vocational education lobby, and so forth. Paul Hill, now an education policy professor at the University of Washington, was a highly placed researcher/scholar in the National Institute of Education in the early 1970s. His primary responsibility was for evaluation of Title I of ESEA, the Compensatory Education portion of the law. Through negotiating and prodding and arguing, he succeeded in having the Congressional hearings for reauthorization organized around a set of topics related to compensatory education that reflected the research and evaluation community’s view of what was important: class size, early childhood developmental concerns, teacher training, and the like. No one can point to a study or a piece of research that affected Congress’s decisions about compensatory education; but for a time, the decision makers talked and thought about the problems in ways similar to how the researchers thought about them.

c) Researchers give testimony (in the “religious” sense of a public acknowledgment or witnessing) to the importance of various ideas or concepts simply by virtue of involving them in their investigations. (“Maybe this is important, or else why would all those pinheads be talking about it?”)

Tuesday, August 29, 2023

Still, Done Too Soon

Bob Stake hired me at Urbana in 1965. Bob was editing the AERA monograph series on Curriculum Evaluation. He shared a copy of a manuscript he was considering. It was “The Methodology of Evaluation.” Up to that point, evaluation for educators was about nothing much more than behavioral objectives and paper-&-pencil tests. Finally though, someone was talking sense about something I could get excited about. At that point I only heard rumors, some true, some not, about the author: he was a philosopher; he was Australian; his parents were wealthy sheep ranchers; he was moving from Indiana to San Francisco; he told a realtor that he wanted an expensive house with only one bedroom.

I didn’t meet Michael in person until about 1968. I had moved to Boulder, and he was attending a board meeting of the Social Sciences Education Consortium. He had helped start SSEC back in Indiana in 1963, and it had moved to Colorado in the meantime. I had nothing to do with SSEC but somehow was invited to the dinner at the Red Lion Inn. I knew Michael would be there, and I was eager to see this person in the flesh. He arrived and a dinner of a dozen or so commenced. As people were seated, Michael began to sing in Latin a portion of some Catholic mass. I had no idea what it was about, but it was clear that he was amused by the reaction of his companions. At one point in the table talk, someone congratulated an economist in attendance on the birth of his 7th child. “A true test of masculinity,” someone loudly remarked. “Hardly, in an age of contraceptives,” said Michael sotto voce. It was 1968 after all.

We next met in 1969. I had the contract from the US Office of Education – it was not a Department yet – to analyze and report the data from the first survey of ESEA Title I, money for the disadvantaged. The contract was large as was my “staff.” I was scared to death. I called in consultants: Bob Stake, Dick Jaeger; but Michael was first. He calmed me down and gave me a plan. I was grateful.

We met again in 1972. It was at AERA in New York. He invited me up to the room to meet someone. It was Mary Anne. She was young; she was extraordinarily beautiful. I was speechless. Those who knew Michael only recently – say, post 1990 – may not have known how handsome and charming he was.

I saw Michael rarely post-1980. His interest in evaluation became his principal focus and my interests wandered elsewhere. One day when I found myself analyzing the results of other people’s analyses, I thought of Michael and “meta-evaluation” (literally the evaluation of evaluations) and decided to call what I was doing, meta-analysis. Very recently, I wrote him and told him that he was responsible for the term “meta-analysis.” I was feeling sorry for him; it was the only thing I could think to say that might make him feel a bit better. I probably overestimated.

In the late 1990s, Sandy and I were in San Francisco and Michael invited us to Inverness for lunch. Imbedded in memory are a half dozen hummingbird feeders, shellfish salad, and the library – or should I say, both libraries. When the house burned down and virtually everything was lost, I remembered the library. When Michael’s Primary Philosophy was first published in 1966, I bought what turned out to be a first printing. Unknown to Michael and many others, apparently, there was an interesting typo. Each chapter’s first page was its number and its title, e.g., III ART. However, on page 87, there was only the chapter number IV. The chapter name was missing: GOD. After the house burned down and the libraries were lost, I sent him my copy of Primary Philosophy; "Keep it." He was amused and grateful.

There was a meeting of Stufflebeam’s people in Kalamazoo around 2000 perhaps. Michael was in charge. I was asked to speak. I can barely remember what I said; maybe something about personally and privately held values versus values that are publicly negotiated. I could tell that Michael was not impressed. It hardly mattered. He invited Sandy and me to see his house by a lake. There were traces that his health was not good.

I can’t let go of the notion that there are some things inside each of us that drive us and give us a sense of right-and-wrong and good-better-best that one might as well call personal values. They are almost like Freud’s super-ego, and they are acquired in the same way, by identification with an object (person) loved or feared. I know I have a very personal sense of when I am doing something right or well. A part of that sense is Michael.

Thursday, July 21, 2022

A Memory


My Last Day on Earth as a "Quantoid"

Gene V Glass

I was taught early in my professional career that personal recollections were not proper stuff for academic discourse. The teacher was my graduate adviser Julian Stanley, and the occasion was the 1963 Annual Meeting of the American Educational Research Association. Walter Cook, of the University of Minnesota, had finished delivering his AERA presidential address. Cook had a few things to say about education, but he had used the opportunity to thank a number of personal friends for their contribution to his life and career, including Nate Gage and Nate's wife; he had spoken of family picnics with the Gages and other professional friends. Afterwards, Julian and Ellis Page and a few of us graduate students were huddled in a cocktail party listening to Julian's post mortem of the presidential remarks. He made it clear that such personal reminiscences on such an occasion were out of place, not to be indulged in. The lesson was clear, but I have been unable to desist from indulging my own predilection for personal memories in professional presentations. But that early lesson has not been forgotten. It remains as a tug on conscience from a hidden teacher, a twinge that says "You should not be doing this," whenever I transgress.

Bob Stake and I and Tom Green and Ralph Tyler (to name only four) come from a tiny quadrilateral no more than 30 miles on any side in Southeastern Nebraska, a fertile crescent (with a strong gradient trailing off to the northeast) that reaches from Adams to Bethany to South Lincoln to Crete, a mesopotamia between the Nemaha and the Blue Rivers that had no more than 100,000 population before WW II. I met Ralph Tyler only once or twice, and both times it was far from Nebraska. Tom Green and I have a relationship conducted entirely by email; we have never met face-to-face. But Bob Stake and I go back a long way.

On a warm autumn afternoon in 1960, I was walking across campus at the University of Nebraska headed for Love Library and, as it turned out, walking by chance into my own future. I bumped into Virginia Hubka, a young woman of 19 at the time, with whom I had grown up since the age of 10 or 11. We seldom saw each other on campus. She was an Education major, and I was studying math and German with prospects of becoming a foreign language teacher in a small town in Nebraska. I had been married for two years at that time and felt a chronic need of money that was being met by janitorial work. Ginny told me of a job for a computer programmer that had just been advertised in the Ed Psych Department where she worked part time as a typist. A new faculty member—just two years out of Princeton with a shiny new PhD in Psychometrics—by the name of Bob Stake had received a government grant to do research.

I looked up Stake and found a young man scarcely ten years my senior with a remarkably athletic looking body for a professor. He was willing to hire a complete stranger as a computer programmer on his project, though the applicant admitted that he had never seen a computer (few had in those days). The project was a monte carlo simulation of sampling distributions of latent roots of the B* matrix in multi-dimensional scaling—which may shock latter-day admirers of Bob's qualitative contributions. Stake was then a confirmed "quantoid" (n., devotee of quantitative methods, statistics geek). I took a workshop and learned to program a Burroughs 205 computer (competitor with the IBM 650); the 205 took up an entire floor of Nebraska Hall, which had to have special air conditioning installed to accommodate the heat generated by the behemoth. My job was to take randomly generated judgmental data matrices and convert them into a matrix of cosines of angles of separation among vectors representing stimulus objects.
It took me six months to create and test the program; on today's equipment, it would require a few hours. Bob took over the resulting matrix and extracted latent roots to be compiled into empirical sampling distributions. The work was in the tradition of metric scaling invented by Thurstone and generalized to the multidimensional case by Richardson and Torgerson and others; it was heady stuff. I was allowed to operate the computer in the middle of the night, bringing it up and shutting it down by myself. Bob found an office for me to share with a couple of graduate students in Ed Psych. I couldn't believe my good luck; from scrubbing floors to programming computers almost overnight. I can recall virtually every detail of those two years I spent working for Bob, first on the MDS project, then on a few other research projects he was conducting (even creating Skinnerian-type programmed instruction for a study of learner activity; my assignment was to program instruction in the Dewey Decimal system).

Stake was an attractive and fascinating figure to a young man who had never in his 20 years on earth traveled farther than 100 miles from his birthplace. He drove a Chevy station wagon, dusty rose and silver. He lived on the south side of Lincoln, a universe away from the lower-middle class neighborhoods of my side of town. He had a beautiful wife and two quiet, intense young boys who hung around his office on Saturdays silently playing games with paper and pencil. In the summer of 1961, I was invited to the Stakes' house for a barbecue. Several graduate students were there (Chris Buethe, Jim Beaird, Doug Sjogren). The backyard grass was long and needed mowing; in the middle of the yard was a huge letter "S" carved by a lawn mower. I imagined Bernadine having said once too often, "Bob, would you please mow the backyard?"
(Bob's children tell me that he was accustomed to mowing mazes in the yard and inventing games for them that involved playing tag without leaving the paths.) That summer, Bob invited me to drive with him to New York City to attend the ETS Invitational Testing Conference. Bob's mother would go with us. Mrs. Stake was a pillar of the small community, Adams, 25 miles south of Lincoln where Bob was born and raised. She regularly spoke at auxiliary meetings and other occasions about the United Nations, then only 15 years old. The trip to New York would give her a chance to renew her experiences and pick up more literature for her talks. Taking me along as a spare driver on a 3,500 mile car trip may not have been a completely selfless act on Bob's part, but going out of the way to visit the University of Wisconsin so that I could meet Julian Stanley and learn about graduate school definitely was generous. Bob had been corresponding with Julian since the Spring of 1961. The latter had written his colleagues around the country urging them to test promising young students of their acquaintance and send him any information about high scores. In those pre-GRE days, the Miller Analogies Test and the Doppelt Mathematical Reasoning Test were the instruments of choice. Julian was eager to discover young, high scorers and accelerate them through a doctoral program, thus preventing for them his own misfortune of having wasted four of his best years in an ammunition dump in North Africa during WW II—and presaging his later efforts to identify math prodigies in middle school and accelerate them through college. Bob had created his own mental ability test, named with the clever pun QED, the Quantitative Evaluative Device. Bob asked me to take all three tests; I loved taking them. He sent the scores to Julian, and subsequently the stop in Madison was arranged. Bob had made it clear that I should not attend graduate school in Lincoln. 

We drove out of Lincoln—the professor, the bumpkin and Adams's Ambassador to the U.N.—on October 27, 1961. Our first stop was Platteville, Wisconsin, where we spent the night with Bill Jensen, a former student of Bob's from Nebraska. Throughout the trip we were never far from Bob's former students who seemed to feel privileged to host his retinue. On day two, we met Julian in Madison and had lunch at the Union beside Lake Mendota with him and Les McLean and Dave Wiley. The company was intimidating; I was certain that I did not fit in and that Lincoln was the only graduate school I was fit for. We spent the third night sleeping in the attic apartment of Jim Beaird, whose dissertation that spring was a piece of the Stake MDS project; he had just started his first academic job at the University of Toledo.

The fourth day took us through the Allegheny Mountains in late October; the oak forests were yellow, orange and crimson, so unlike my native savanna. We shared the driving. Bob drove through rural New Jersey searching for the small community where his brother Don lived; he had arranged to drop off his mother there. The maze was negotiated without the aid of road maps or other prostheses; indeed, none was consulted during the entire ten days. That night was spent in Princeton. Fred Kling, a former ETS Princeton Psychometric Fellow at Princeton with Bob, and his wife entertained us with a spaghetti dinner by candlelight. It was the first time in my life I had seen candles on a dinner table other than during a power outage, as it was also the first time I had tasted spaghetti not out of a can.

The next day we called on Harold Gulliksen at his home. Gulliksen had been Bob's adviser at Princeton. We were greeted by his wife, who showed us to a small room outside his home office. We waited a few minutes while he disengaged from some strenuous mental occupation.
Gulliksen swept into the room wearing white shirt and tie; he shook my hand when introduced; he focused on Bob's MDS research. The audience was over within fifteen minutes. I didn't want to return to Princeton. We drove out to the ETS campus. Bob may have been gone for three years, but he was obviously not forgotten. Secretaries in particular seemed happy to see him. Bob was looking for Sam Messick. I was overwhelmed to see that these citations—(Abelson and Messick, 1958)—were actual persons, not like anything I had ever seen in Nebraska of course, but actual living, breathing human beings in whose presence one could remain for several minutes without something disastrous happening. Bob reported briefly on our MDS project to Messick. Sam had a manuscript in front of him on his desk. "Well, it may be beside the point," Messick replied to Bob's description of our findings. He held up the manuscript. It was a pre-publication draft of Roger Shepard's "Analysis of Proximities," which was to revolutionize multidimensional scaling and render our monte carlo study obsolete. It was October 30, 1961. It was Bob Stake's last day on earth as a quantoid. The ETS Invitational Testing Conference was held in the Roosevelt Hotel in Manhattan. We bunked with Hans Steffan in East Orange and took the tube to Manhattan. Hans had been another Stake student; he was a native German and I took the opportunity to practice my textbook Deutsch. I will spare the reader a 21-year-old Nebraska boy's impressions of Manhattan, all too shopworn to bear repeating. The Conference was filled with more walking citations: Bob Ebel, Ledyard Tucker, E. F. Lindquist, Ted Cureton, famous name after famous name. (Ten years later, I had the honor of chairing the ETS Conference, which gave me the opportunity to pick the roster of speakers along with ETS staff. I asked Bob to present his ideas on assessment; he gave a talk about National Assessment that featured a short film that he had made. 
People remarked that they were not certain that he was being "serious." His predictions about NAEP were remarkably prescient.) We picked up Bob's mother in Harrisburg, Pennsylvania, for some reason now forgotten. While we had listened to papers, she had invaded and taken over the U.N. We pointed the station wagon west; we made one stop in Toledo to sleep for a few hours. I did more than my share behind the wheel. I was extremely tired, having not slept well in New York. Bob and I usually slept in the same double bed on this trip and I was too worried about committing some gross act in my sleep to rest comfortably. I had a hard time staying awake during my stints at the wheel, but I would not betray weakness by asking for relief. I nearly fell asleep several times through Ohio, risking snuffing out two promising academic careers and breaking Adams, Nebraska's only diplomatic tie to the United Nations. To help relieve the boredom of the long return trip, Bob and I played a word game that he had learned or invented. It was called "Ghost." Player one thinks of a five-letter word, say "spice." Player two guesses a five-letter word to start; suppose I guessed "steam." Player one superimposes, in his mind, the target word "spice" and my first guess "steam" and sees that one letter coincides—the "s." Since one letter is an odd number of letters, he replies "odd." If no letters coincide he says "even." If I had been very lucky—actually unlucky—and first guessed "slice," player one would reply "even" because four letters coincide. (This would actually have been an unlucky start since one reasonably assumes that the initial response "even" means that zero letters coincide. I think that games of this heinous intricacy are not unknown to Stake children.) Through a process of guessing words and deducing coincidences from "odd" and "even" responses, player two eventually discovers player one's word. It is a difficult game and it can consume hundreds of miles on the road. 
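
For readers who want to try the game, the reply rule described above can be sketched in a few lines of Python. This is only an illustration of the rule as recounted here: the function names `ghost_reply` and `consistent_words` are invented for this sketch, and the short candidate list is a made-up example, not anything Bob used.

```python
def ghost_reply(target: str, guess: str) -> str:
    """Reply "odd" or "even" according to how many letters coincide
    when the guess is superimposed on the target, position by position."""
    if len(target) != len(guess):
        raise ValueError("both words must have the same number of letters")
    coincidences = sum(1 for t, g in zip(target, guess) if t == g)
    return "odd" if coincidences % 2 == 1 else "even"


def consistent_words(candidates, history):
    """Keep only the candidate words that would have produced every
    reply heard so far. `history` is a list of (guess, reply) pairs."""
    return [
        word for word in candidates
        if all(ghost_reply(word, guess) == reply for guess, reply in history)
    ]


# The two examples from the text, with "spice" as the hidden word.
print(ghost_reply("spice", "steam"))  # one coincidence (the "s")
print(ghost_reply("spice", "slice"))  # four coincidences

# Hypothetical candidate list, narrowed by a single "odd" reply to "steam".
print(consistent_words(["spice", "ouija", "stone"], [("steam", "odd")]))
```

The second function shows why the game is hard: each reply only rules out the words whose parity of coincidences disagrees, so many guesses are needed before a single word remains.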
Several rounds of the game took us through Ohio, Indiana, Illinois. Somewhere around the Quad Cities, Bob played his trump card. He was thinking of a word that resisted all my most assiduous attempts at deciphering. Finally, outside Omaha I conceded defeat. His word was "ouija," as in the board. Do we take this incident as in some way a measure of this man? By the time I arrived in Lincoln, a Western Union Telegram from Julian was waiting. I had never before received a telegram—or known anyone who had. I was flattered; I was hooked. Three months later, January 1962, I left Lincoln, Stake and everything I had known my entire life for graduate school. Bob and I corresponded regularly during the ensuing years. He wrote to tell me that he had taken a job at Urbana. I told him I was learning all that was known about statistics. He wrote several times during his summer, 1964, at Stanford in the institute that Lee Cronbach and Richard Atkinson conducted. Clearly it was a transforming experience for him. I was jealous. When I finished my degree in 1965, Bob had engineered a position for me in CIRCE at Univ. of Illinois. I was there when Bob wrote his "Countenance" paper; I pretended to understand it. I learned that there was a world beyond statistics; Bob had undergone enormous changes intellectually since our MDS days. I admired them, even as I recognized my own inability to follow. I spent two years at CIRCE; I think I felt the need to shine my own light away from the long shadows. I picked a place where I thought I might shine: Colorado. Bob and I saw very little of each other from 1967 on. In the early 1970s, I invited him to teach summer school at Boulder. He gave a seminar on evaluation and converted all my graduate students into Stake-ians. But I saw little of him that summer. We didn't connect again until 1978. When the year 1978 arrived, I was at the absolute height of my powers as a quantoid. 
My book on time-series experiment analysis was being reviewed by generous souls who called it a "watershed." Meta-analysis was raging through the social and behavioral sciences. I had nearly completed the class-size meta-analysis. The Hastings Symposium, on the occasion of Tom Hastings's retirement as head of CIRCE, was happening in Urbana in January. I attended. Lee Cronbach delivered a brilliant paper that gradually metamorphosed into his classic Designing Evaluations of Educational and Social Programs. Lee argued that the place of controlled experiments in educational evaluation is much less than we had once imagined. "External validity," if we must call it that, is far more important than "internal validity," which is after all not just an impossibility but a triviality. Experimental validity can not be reduced to a catechism. Well, this cut to the heart of my quantoid ideology, and I remember rising during the discussion of Lee's paper to remind him that controlled, randomized experiments worked perfectly well in clinical drug trials. He thanked me for divulging this remarkable piece of intelligence.

That summer I visited Eva Baker's Center for the Study of Evaluation at UCLA for eight weeks. Bob came for two weeks at Eva's invitation. One day he dropped a sheet of paper on my desk that contained only these words:

I was a quantoid, and "what I do best" was peaking. I gave a colloquium at Eva's center on the class size meta-analysis in mid-June. People were amazed. Jim Popham asked for the paper to inaugurate his new journal Educational Evaluation and Policy Analysis. He was welcome to it.

June 30, 1978, dawned inauspiciously; I had no warning that it would be my last day on earth as a quantoid. Bob was to speak at a colloquium at the Center on whatever it was that was on his mind at that moment. Ernie House was visiting from Urbana. I was looking forward to the talk, because Bob never gave a dull lecture in his life. That day he talked about portrayal, complexity, understanding; qualities that are not yet nor may never be quantities; the ineffable (Bob has never been a big fan of the "effable"). I listened with respect and admiration, but I listened as one might listen to stories about strange foreign lands, about something that was interesting but that bore no relationship to one's own life. Near the end when questions were being asked I sought to clarify the boundaries that contained Bob's curious thoughts. I asked, "Just to clarify, Bob, between an experimentalist evaluator and a school person with intimate knowledge of the program in question, who would you trust to produce the most reliable knowledge of the program's efficacy?" I sat back confident that I had shown Bob his proper place in evaluation—that he couldn't really claim to assess impact, efficacy, cause-and-effect with his case-study, qualitative methods—and waited for his response, which came with uncharacteristic alacrity. "The school person," he said. I was stunned. Here was a person I respected without qualification whose intelligence I had long admired who was seeing the world far differently from how I saw it.

Bob and Ernie and I stayed long after the colloquium arguing about Bob's answer, rather Ernie and I argued vociferously while Bob occasionally interjected a word or sentence of clarification.
I insisted that causes could only be known (discovered, found, verified) by randomized, controlled experiments with double-blinding and followed up with statistical significance tests. Ernie and Bob argued that even if you could bring off such an improbable event as the experiment I described, you still wouldn't know what caused a desirable outcome in a particular venue. I couldn't believe what they were saying; I heard it, but I thought they were playing Jesuitical games with words. Was this Bob's ghost game again?

Eventually, after at least an hour's heated discussion I started to see Bob and Ernie's point. Knowledge of a "cause" in education is not something that automatically results from one of my ideal experiments. Even if my experiment could produce the "cause" of a wonderful educational program, it would remain for those who would share knowledge of that cause with others to describe it to them, or act it out while they watched, or somehow communicate the actions, conditions and circumstances that constitute the "cause" that produces the desired effect. They—Bob and Ernie—saw the experimenter as not trained, not capable of the most important step in the chain: conveying to others a sense of what works and how to bring it about. "Knowing" what caused the success is easier, they believed, than "portraying" to others a sense for what is known.

I can not tell you, dear reader, why I was at that moment prepared to accept their belief and their arguments, but I was. What they said in that hour after Bob's colloquium suddenly struck me as true. And in the weeks and months after that exchange in Moore Hall at UCLA, I came to believe what they believed about studying education and evaluating schools: many people can know causes; few experiments can clarify causal claims; telling others what we know is the harder part. It was my last day on earth as a quantoid.

In the early 1970s, Bob introduced me to the writings of another son of Lincoln, Loren Eiseley, the anthropologist, academic and author, whom Wystan H. Auden once named as one of the leading poets of his generation. Eiseley wrote often about his experiences in the classroom; he wrote of "hidden teachers," who touch our lives and never leave us, who speak softly at the back of our minds, who say "Do this; don’t do that." In his book The Invisible Pyramid, Eiseley wrote of "The Last Magician." "Every man in his youth—and who is to say when youth is ended?—meets for the last time a magician, a man who made him what he is finally to be." (p. 137) For Eiseley, that last magician is no secret to those who have read his autobiography, All the Strange Hours; he was Frank Speck, an anthropology professor at the University of Pennsylvania who was Eiseley's adviser, then colleague, and to whose endowed chair Eiseley succeeded upon Speck's retirement. (It is a curious coincidence that all Freudians will love that Eiseley's first published book was a biography of Francis Bacon entitled The Man Who Saw Through Time; Francis Bacon and Frank Speck are English and German translations of each other.)

Eiseley described his encounter with the ghost of his last magician: "I was fifty years old when my youth ended, and it was, of all unlikely places, within that great unwieldy structure built to last forever and then hastily to be torn down—the Pennsylvania Station in New York. I had come in through a side doorway and was slowly descending a great staircase in a slanting shaft of afternoon sunlight. Distantly I became aware of a man loitering at the bottom of the steps, as though awaiting me there. As I descended he swung about and began climbing toward me."

Eiseley had seen a ghost. His mind fixed on the terror he felt at encountering Speck's ghost. They had been friends. Why had he felt afraid? "On the slow train running homeward the answer came.
I had been away for ten years from the forest. I had had no messages from its depths.... I had been immersed in the postwar administrative life of a growing university. But all the time some accusing spirit, the familiar of the last wood-struck magician, had lingered in my brain. Finally exteriorized, he had stridden up the stair to confront me in the autumn light. Whether he had been imposed in some fashion upon a convenient facsimile or was a genuine illusion was of little importance compared to the message he had brought. I had starved and betrayed myself. It was this that had brought the terror. For the first time in years I left my office in midafternoon and sought the sleeping silence of a nearby cemetery. I was as pale and drained as the Indian pipe plants without chlorophyll that rise after rains on the forest floor. It was time for a change. I wrote a letter and studied timetables. I was returning to the land that bore me." (p. 139)

Whenever I am at my worst—rash, hostile, refusing to listen, unwilling even to try to understand—something tugs at me from somewhere at the back of consciousness, asking me to be better than that, to be more like this person or that person I admire. Bob Stake and I are opposites on most dimensions that I can imagine. I form judgments prematurely; he is slow to judge. I am impetuous; he is reflective. I talk too much; perhaps he talks not enough. I change my persona every decade; his seemingly never changes. And yet, Bob has always been for me a hidden teacher.

Note

This is the text of remarks delivered in part on the occasion of a symposium honoring the retirement of Robert E. Stake, University of Illinois—UC. May 9, 1998 in Urbana, Illinois.

References

Eiseley, Loren (1970). The Invisible Pyramid. New York: Scribner.

Saturday, July 2, 2022

Meta-analysis at 25: A Personal History

January 2000

A Personal History

Gene V Glass

Arizona State University

Email: glass@asu.edu

It has been nearly 25 years since meta-analysis, under that name and in its current guise, made its first appearance. I wish to avoid the weary references to the new century or millennium—depending on how apocalyptic you're feeling (besides, it's 5759 on my calendar anyway)—and simply point out that meta-analysis is at the age when most things graduate from college, so it's not too soon to ask what accounting can be made of it. I have refrained from publishing anything on the topic of the methods of meta-analysis since about 1980 out of a reluctance to lay some heavy hand on other people's enthusiasms and a wish to hide my cynicism from public view. Others have eagerly advanced its development and I'll get to their contributions shortly (Cooper & Hedges, 1994; Hedges & Olkin, 1985; Hunter, Schmidt & Jackson, 1982).

Autobiography may be the truest, most honest narrative, even if it risks self-aggrandizement, or worse, self-deception. Forgive me if I risk the latter for the sake of the former. For some reason it is increasingly difficult these days to speak in any other way.

In the span of this rather conventional paper, I wish to review the brief history of the form of quantitative research synthesis that is now generally known as "meta-analysis" (though I can't possibly recount this history as well as has Morton Hunt (1997) in his new book How Science Takes Stock: The story of Meta-Analysis), tell where it came from, why it happened when it did, what was wrong with it and what remains to be done to make the findings of research in the social and behavioral sciences more understandable and useful.

In 25 years, meta-analysis has grown from an unheard of preoccupation of a very small group of statisticians working on problems of research integration in education and psychotherapy to a minor academic industry, as well as a commercial endeavor (see http://epidemiology.com/ and http://members.tripod.com/~Consulting_Unlimited/, for example). A keyword web search—the contemporary measure of visibility and impact—(Excite, January 28, 2000) on the word "meta-analysis" brings 2,200 "hits" of varying degrees of relevance, of course. About 25% of the articles in the Psychological Bulletin in the past several years have the term "meta-analysis" in the title. Its popularity in the social sciences and education is nothing compared to its influence in medicine, where literally hundreds of meta-analyses have been published in the past 20 years. (In fact, my internist quotes findings of what he identifies as published meta-analyses during my physical exams.) An ERIC search shows well over 1,500 articles on meta-analyses written since 1975.

Surely it is true that as far as meta-analysis is concerned, necessity was the mother of invention, and if it hadn't been invented—so to speak—in the early 1970s it would have been invented soon thereafter since the volume of research in many fields was growing at such a rate that traditional narrative approaches to summarizing and integrating research were beginning to break down. But still, the combination of circumstances that brought about meta-analysis in about 1975 may itself be interesting and revealing. There were three circumstances that influenced me.

The first was personal. I left the University of Wisconsin in 1965 with a brand new PhD in psychometrics and statistics and a major league neurosis—years in the making—that was increasingly making my life miserable. Luckily, I found my way into psychotherapy that year while on the faculty of the University of Illinois and never left it until eight years later while teaching at the University of Colorado. I was so impressed with the power of psychotherapy as a means of changing my life and making it better that by 1970 I was studying clinical psychology (with the help of a good friend and colleague Vic Raimy at Boulder) and looking for opportunities to gain experience doing therapy.

In spite of my personal enthusiasm for psychotherapy, the weight of academic opinion at that time derived from Hans Eysenck's frequent and tendentious reviews of the psychotherapy outcome research that proclaimed psychotherapy as worthless—a mere placebo, if that. I found this conclusion personally threatening—it called into question not only the preoccupation of about a decade of my life but my scholarly judgment (and the wisdom of having dropped a fair chunk of change) as well. I read Eysenck's literature reviews and was impressed primarily with their arbitrariness, idiosyncrasy and high-handed dismissiveness. I wanted to take on Eysenck and show that he was wrong: psychotherapy does change lives and make them better.

The second circumstance that prompted meta-analysis to come out when it did had to do with an obligation to give a speech. In 1974, I was elected President of the American Educational Research Association, in a peculiar miscarriage of the democratic process. This position is largely an honorific title that involves little more than chairing a few Association Council meetings and delivering a "presidential address" at the Annual Meeting. It's the "presidential address" that is the problem. No one I know who has served as AERA President really feels that they deserved the honor; the number of more worthy scholars passed over not only exceeds the number of recipients of the honor by several times, but as a group they probably outshine the few who were honored. Consequently, the need to prove one's worthiness to oneself and one's colleagues is nearly overwhelming, and the most public occasion on which to do it is the Presidential address, where one is assured of an audience of 1,500 or so of the world's top educational researchers. Not a few of my predecessors and contemporaries have cracked under this pressure and succumbed to the temptation to spin out grandiose fantasies about how educational research can become infallible or omnipotent, or about how government at national and world levels must be rebuilt to conform to the dictates of educational researchers. And so I approached the middle of the 1970s knowing that by April 1976 I was expected to release some bombast on the world that proved my worthiness for the AERA Presidency, and knowing that most such speeches were embarrassments spun out of feelings of intimidation and unworthiness. 
(A man named Richard Krech, I believe, won my undying respect when I was still in graduate school; having been distinguished by the American Psychological Association in the 1960s with one of its highest research awards, Krech, a professor at Berkeley, informed the Association that he was honored, but that he had nothing particularly new to report to the organization at the obligatory annual convention address, but if in the future he did have anything worth saying, they would hear it first.)

The third set of circumstances that joined my wish to annihilate Eysenck and prove that psychotherapy really works and my need to make a big splash with my Presidential Address was that my training under the likes of Julian Stanley, Chester Harris, Henry Kaiser and George E. P. Box at Wisconsin in statistics and experimental design had left me with a set of doubts and questions about how we were advancing the empirical agenda in educational research. In particular, I had learned to be very skeptical of statistical significance testing; I had learned that all research was imperfect in one respect or another (or, in other words, there are no "perfectly valid" studies nor any line that demarcates "valid" from "invalid" studies); and third, I was beginning to question a taken-for-granted assumption of our work that we progress toward truth by doing what everyone commonly refers to as "studies." (I know that these are complex issues that need to be thoroughly examined to be accurately communicated, and I shall try to return to them.) I recall two publications from graduate school days that impressed me considerably. One was a curve relating serial position of a list of items to be memorized to probability of correct recall that Benton Underwood (1957) had synthesized from a dozen or more published memory experiments. The other was a Psychological Bulletin article by Sandy Astin on the effects of glutamic acid on mental performance (whose results presaged a meta-analysis of the Feingold diet research 30 years later in that poorly controlled experiments showed benefits and well controlled experiments did not).

Permit me to say just a word or two about each of these studies because they very much influenced my thinking about how we should "review" research. Underwood had combined the findings of 16 experiments on serial learning to demonstrate a consistent geometrically decreasing curve describing the declining probability of correct recall as a function of number of previously memorized items, thus giving strong weight to an interference explanation of recall errors. What was interesting about Underwood's curve was that it was an amalgamation of studies that had different lengths of lists and different items to be recalled (nonsense syllables, baseball teams, colors and the like).

Astin's Psychological Bulletin review had attracted my attention in another respect. Glutamic acid (it will now scarcely be remembered) was a discovery of the 1950s that putatively increased the ability of tissue to absorb oxygen. Reasoning with the primitive constructs of the time, researchers hypothesized that more oxygen to the brain would produce more intelligent behavior. (It is not known what amount of oxygen was reaching the brains of the scientists proposing this hypothesis.) A series of experiments in the 1950s and 1960s tested glutamic acid against "control groups" and by 1961, Astin was able to array these findings in a crosstabulation that showed that the chances of finding a significant effect for glutamic acid were related (according to a chi-square test) to the presence or absence of various controls in the experiment; placebos and blinding of assessors, for example, were associated with no significant effect of the acid. As irrelevant as the chi-square test now seems, at the time I saw it done, it was revelatory to see "studies" being treated as data points in a statistical analysis. (In 1967, I attempted a similar approach while reviewing the experimental evidence on the Doman-Delacato pattern therapy; see Glass and Robbins, 1967.)

At about the same time I was reading Underwood and Astin, I certainly must have read Ben Bloom's Stability of Human Characteristics (1963), but its aggregated graphs of correlation coefficients made no impression on me; it was only many years after the work to be described below that I noticed a similarity between his approach and meta-analysis. Perhaps the connections were not made because Bloom dealt with variables such as age, weight, height, IQ and the like where the problems of dissimilarity of variables did not force one to worry about the kinds of problem that lie at the heart of meta-analysis.

If precedence is of any concern, Bob Rosenthal deserves as much credit as anyone for furthering what we now conveniently call "meta-analysis." In 1976, he published Experimenter Effects in Behavioral Research, which contained calculations of many "effect sizes" (i.e., standardized mean differences) that were then compared across domains or conditions. If Bob had just gone a little further in quantifying study characteristics and subjecting the whole business to regression analyses and what-not, and then thinking up a snappy name, it would be his name that comes up every time the subject is research integration. But Bob had an even more positive influence on the development of meta-analysis than one would infer from his numerous methodological writings on the subject. When I was making my initial forays onto the battlefield of psychotherapy outcome research (about which more soon), Bob wrote me a very nice and encouraging letter in which he indicated that the approach we were taking made perfect sense. Of course, it ought to have made sense to him, considering that it was not that different from what he had done in Experimenter Effects. He probably doesn't realize how important that validation from a stranger was. (And while on the topic of snappy names, although people have suggested or promoted several polysyllabic alternatives, such as quantitative synthesis and statistical research integration, the name meta-analysis, suggested by Michael Scriven's meta-evaluation (meaning the evaluation of evaluations), appears to have caught on. To press on further into it, the "meta" comes from the Greek preposition meaning "behind" or "in back of." Its application as in "metaphysics" derives from the fact that in the publication of Aristotle's writings during the Middle Ages, the section dealing with the transcendental was bound immediately behind the section dealing with physics; lacking any title provided by its author, this final section became known as Aristotle's "metaphysics."
So, in fact, metaphysics is not some grander form of physics, some all-encompassing, overarching general theory of everything; it is merely what Aristotle put after the stuff he wrote on physics. The point of this aside is to attempt to leach out of the term "meta-analysis" some of the grandiosity that others see in it. It is not the grand theory of research; it is simply a way of speaking of the statistical analysis of statistical analyses.)

So positioned in these circumstances, in the summer of 1974, I set about to do battle with Dr. Eysenck and prove that psychotherapy—my psychotherapy—was an effective treatment. (Incidentally, though it may be of only the merest passing interest, my preferences for psychotherapy are Freudian, a predilection that causes Ron Nelson and other of my ASU colleagues great distress, I'm sure.) I joined the battle with Eysenck's 1965 review of the psychotherapy outcome literature. Eysenck began his famous reviews by eliminating from consideration all theses, dissertations, project reports or other contemptible items not published in peer-reviewed journals. This arbitrary exclusion of literally hundreds of evaluations of therapy outcomes was indefensible. It's one thing to believe that peer review guarantees truth; it is quite another to believe that all truth appears in peer reviewed journals. (The most important paper on the multiple comparisons problem in ANOVA was distributed as an unpublished ditto manuscript from the Princeton University Mathematics Department by John Tukey; it never was published in a peer reviewed journal.)

Next, Eysenck eliminated any experiment that did not include an untreated control group. This makes no sense whatever, since head-to-head comparisons of two different types of psychotherapy contribute a great deal to our knowledge of psychotherapy effects. If a horse runs 20 mph faster than a man and 35 mph faster than a pig, I can conclude with confidence that the man will outrun the pig by 15 mph. Having winnowed a huge literature down to 11 studies (!) by whim and prejudice, Eysenck proceeded to describe their findings solely in terms of whether or not statistical significance was attained at the .05 level. No matter that the results may have barely missed the .05 level or soared beyond it. All that Eysenck considered worth noting about an experiment was whether the differences reached significance at the .05 level. If it reached significance at only the .07 level, Eysenck classified it as showing "no effect for psychotherapy."
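Both points in the paragraph above can be checked with a little arithmetic. The sketch below is illustrative only: the two trials are hypothetical, the function names are invented here, and the p-values come from a simple normal approximation with equal group sizes, not from any study Eysenck reviewed. It shows that one and the same standardized effect can land on either side of the .05 line depending on nothing but sample size.

```python
from math import sqrt
from statistics import NormalDist


def indirect_difference(a_over_b: float, a_over_c: float) -> float:
    """Infer how much B exceeds C from A-vs-B and A-vs-C comparisons."""
    return a_over_c - a_over_b


def two_sided_p(effect_size: float, n_per_group: int) -> float:
    """Two-sided p-value for a standardized mean difference.

    Assumption: a z-test approximation with two equal-sized groups,
    so the test statistic is effect_size * sqrt(n_per_group / 2).
    """
    z = effect_size * sqrt(n_per_group / 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Horse beats man by 20 mph and pig by 35 mph, so man beats pig by 15 mph.
man_over_pig = indirect_difference(20, 35)

# Hypothetical trials: identical effect (0.5 SD), different sample sizes.
p_larger_trial = two_sided_p(0.5, 32)
p_smaller_trial = two_sided_p(0.5, 26)

print(man_over_pig, round(p_larger_trial, 3), round(p_smaller_trial, 3))
```

With these made-up inputs the larger trial falls just under .05 and the smaller one lands near .07, although the estimated benefit of treatment is identical in both. A tally of "significant" versus "not significant" would count them as contradicting each other.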

Finally, Eysenck did something truly staggering in its illogic. If a study showed significant differences favoring therapy over control on what he regarded as a "subjective" measure of outcome (e.g., the Rorschach or the Thematic Apperception Test), he discounted the findings entirely. So be it; he may be a tough case, but that's his right. But then, when encountering a study that showed differences on an "objective" outcome measure (e.g., GPA) but no differences on a subjective measure (like the TAT), Eysenck discounted the entire study because the outcome differences were "inconsistent."

Looking back on it, I can almost credit Eysenck with the invention of meta-analysis by anti-thesis. By doing everything in the opposite way that he did, one would have been led straight to meta-analysis. Adopt an a posteriori attitude toward including studies in a synthesis, replace statistical significance by measures of strength of relationship or effect, and view the entire task of integration as a problem in data analysis where "studies" are quantified and the resulting data-base subjected to statistical analysis, and meta-analysis assumes its first formulation. (Thank you, Professor Eysenck.)
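The idea of treating "studies" as rows in a data set can be sketched in a few lines. Everything in the block below is an assumption made for illustration: the three study summaries are invented numbers, the field and function names are hypothetical, and dividing by the control group's standard deviation is only one common way of standardizing a mean difference.

```python
from statistics import mean


def standardized_mean_difference(mean_treated: float,
                                 mean_control: float,
                                 sd_control: float) -> float:
    """Treatment-minus-control difference in control-group SD units."""
    return (mean_treated - mean_control) / sd_control


# Hypothetical study summaries (made-up numbers, not data from any review).
# Each study uses a different outcome scale, which is why the differences
# are standardized before being combined.
studies = [
    {"label": "study A", "mean_treated": 54.0, "mean_control": 50.0, "sd_control": 10.0},
    {"label": "study B", "mean_treated": 31.0, "mean_control": 28.0, "sd_control": 5.0},
    {"label": "study C", "mean_treated": 22.0, "mean_control": 19.0, "sd_control": 6.0},
]

# One effect size per study: the "data points" of the synthesis.
effects = [
    standardized_mean_difference(s["mean_treated"], s["mean_control"], s["sd_control"])
    for s in studies
]

# The simplest possible analysis of those data points: their average.
average_effect = mean(effects)
print(effects, average_effect)
```

Once each study is reduced to an effect size plus coded characteristics, the collection can be analyzed like any other data set, by averaging, regressing, or cross-classifying.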

Working with my colleague Mary Lee Smith, I set about to collect all the psychotherapy outcome studies that could be found and subjected them to this new form of analysis. By May of 1975, the results were ready to try out on a friendly group of colleagues. The May 12th Group had been meeting yearly since about 1968 to talk about problems in the area of program evaluation. The 1975 meeting was held in Tampa at Dick Jaeger's place. I worked up a brief handout and nervously gave my friends an account of the preliminary results of the psychotherapy meta-analysis. Lee Cronbach was there; so were Bob Stake, David Wiley, Les McLean and other trusted colleagues who could be relied on to demolish any foolishness they might see. To my immense relief they found the approach plausible or at least not obviously stupid. (I drew frequently in the future on that reassurance when others, whom I respected less, pronounced the entire business stupid.)

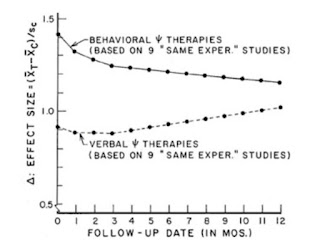

The first meta-analysis of the psychotherapy outcome research found that the typical therapy trial raised the treatment group to a level about two-thirds of a standard deviation on average above untreated controls; the average person receiving therapy finished the experiment in a position that exceeded the 75th percentile in the control group on whatever outcome measure happened to be taken. This finding summarized dozens of experiments encompassing a few thousand persons as subjects and must have been cold comfort to Professor Eysenck.
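The step from "two-thirds of a standard deviation" to "the 75th percentile" can be checked directly. The sketch below assumes that outcome scores in the control group are roughly normally distributed, which the text does not state; the function name is invented for illustration.

```python
from statistics import NormalDist


def control_percentile(effect_size: float) -> float:
    """Percentile of the control distribution reached by the average
    treated person, assuming normally distributed control outcomes."""
    return 100 * NormalDist().cdf(effect_size)


# An average advantage of two-thirds of a standard deviation.
percentile = control_percentile(2 / 3)
print(round(percentile))
```

Under that normality assumption the average treated person sits at roughly the 75th percentile of the untreated group, in line with the figure quoted above.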

An expansion and reworking of the psychotherapy experiments resulted in the paper that was delivered as the much feared AERA Presidential address in April 1976. Its reception was gratifying. Two months later a long version was presented at a meeting of psychotherapy researchers in San Diego. Their reactions foreshadowed the eventual reception of the work among psychologists. Some said that the work was revolutionary and proved what they had known all along; others said it was wrongheaded and meaningless. The widest publication of the work came in 1977, in a now, may I say, famous article by Smith and Glass in the American Psychologist. Eysenck responded to the article by calling it "mega-silliness," a moderately clever play on meta-analysis that nonetheless swayed few.

Psychologists tended to fixate on the fact that the analysis gave no warrant to any claims that one type or style of psychotherapy was any more effective than any other: whether called "behavioral" or "Rogerian" or "rational" or "psychodynamic," all the therapies seemed to work and to work to about the same degree of effectiveness. Behavior therapists, who had claimed victory in the psychotherapy horserace because they were "scientific" and others weren't, found this conclusion unacceptable and took it as reason enough to declare meta-analysis invalid. Non-behavioral therapists (the Rogerians, Adlerians and Freudians, to name a few) hailed the meta-analysis as one of the great achievements of psychological research: a "classic," a "watershed." My cynicism about research and much of psychology dates from approximately this period.

The first appearances of meta-analysis in the 1970s were not met universally with encomiums and expressions of gratitude. There was no shortage of critics who found the whole idea wrong-headed, senseless, misbegotten, etc.

The Apples-and-Oranges Problem